Azure Networking Basics

By Insight Editor / 8 May 2013 , Updated on 16 May 2019 / Topics: Microsoft Azure Networking

Azure networking has come a long way since Windows Azure became public. In its early days, Azure Private Virtual LAN (PVLAN) was a deep-down, "under the hood" part of the infrastructure, essentially invisible to Platform as a Service (PaaS) applications deployed in the Azure cloud.

Microsoft has since turned the PVLAN into a manageable, configurable infrastructure service that can connect PaaS applications, Infrastructure as a Service (IaaS) Azure Virtual Machines (VMs) and on-premises resources. Let’s take a closer look at the concepts.

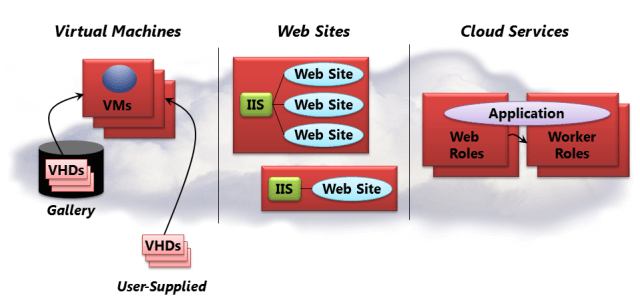

Azure PaaS applications (web role, worker role and, later, VM role) are the "regular" Azure applications that don’t keep their data or internal state on their local disk. Instead, all data is stored in Azure storage: blobs, tables and queues. Hence, the VMs hosting such PaaS apps can be destroyed and quickly restarted on a different VM host, possibly on entirely different hardware. This description doesn’t cover every scenario, but it’s the main intended use case for PaaS apps.
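To make the stateless pattern concrete, here is a minimal Python sketch. The `DurableStore` class is a hypothetical in-memory stand-in for Azure queue and blob storage, and the role instance names are illustrative only. Real Azure queues hide a message while it is processed and require an explicit delete; this sketch simplifies that to a single pop.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class DurableStore:
    """Hypothetical in-memory stand-in for Azure queue and blob storage."""
    queue: deque = field(default_factory=deque)  # pending work items
    blobs: dict = field(default_factory=dict)    # results, keyed by blob name


class WorkerInstance:
    """A role instance that keeps no state of its own between work items."""

    def __init__(self, name: str, store: DurableStore):
        self.name = name
        self.store = store  # all durable state lives here, not on the local disk

    def process_one(self) -> bool:
        if not self.store.queue:
            return False
        # Simplification: real Azure queues hide a message and need an explicit delete.
        job_id, payload = self.store.queue.popleft()
        self.store.blobs[f"results/{job_id}"] = payload.upper()
        return True


store = DurableStore()
store.queue.extend([(1, "alpha"), (2, "beta")])

first = WorkerInstance("WorkerRole_IN_0", store)
first.process_one()
del first  # the instance is destroyed; no work or results are lost

replacement = WorkerInstance("WorkerRole_IN_1", store)  # restarted elsewhere
replacement.process_one()
print(sorted(store.blobs))  # ['results/1', 'results/2']
```

Because the replacement instance finds the remaining work and the earlier result in shared storage, destroying the first instance costs nothing, which is what lets the platform move PaaS instances freely.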

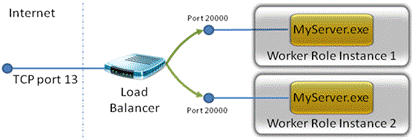

Azure PaaS applications declare an input endpoint (also known as an external or public endpoint) in their service definition file: a TCP port on which the application listens for incoming requests. The Azure routing and load-balancing infrastructure opens this port to the outside world on the Azure cloud firewall, at the application's publicly visible virtual IP address (VIP).

PaaS apps can have zero or more internal endpoints (TCP or UDP ports), which are visible only to other roles packaged in the same deployed Azure service. These roles and internal endpoints are invisible to the outside world, and to fellow Azure tenants' applications, because Azure hosts them on its own PVLAN.

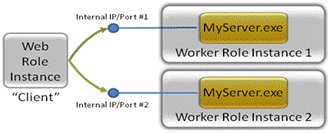

In simple terms, the PVLAN address space is a private universe, accessible only to the roles that live in it. The firewall on each role is set to allow inbound requests on these internal ports. One major difference from input endpoints is that no load balancer is involved: roles communicate with each other on internal endpoints directly.
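The difference between the two endpoint types can be sketched in a few lines of Python. This is a simplified model, not Azure's implementation: `RoleInstance`, `InputEndpoint` and `internal_address` are hypothetical names, the addresses are made up, and round-robin stands in for whatever distribution algorithm the real load balancer uses.

```python
from __future__ import annotations

import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleInstance:
    instance_id: str
    dip: str  # hypothetical private (PVLAN) address of this instance


class InputEndpoint:
    """Public endpoint: clients see only the VIP; the load balancer picks an instance."""

    def __init__(self, vip: str, port: int, instances: list[RoleInstance]):
        self.vip = vip
        self.port = port
        # Round-robin is a stand-in for Azure's real distribution algorithm.
        self._next = itertools.cycle(instances)

    def route(self) -> tuple[str, int]:
        instance = next(self._next)
        return instance.dip, self.port


def internal_address(instance: RoleInstance, port: int) -> tuple[str, int]:
    """Internal endpoint: the caller targets one specific instance, no load balancer."""
    return instance.dip, port


instances = [RoleInstance("WebRole_IN_0", "10.0.0.4"), RoleInstance("WebRole_IN_1", "10.0.0.5")]
public = InputEndpoint("203.0.113.10", 80, instances)
print([public.route() for _ in range(3)])
# [('10.0.0.4', 80), ('10.0.0.5', 80), ('10.0.0.4', 80)]
print(internal_address(instances[1], 8080))  # ('10.0.0.5', 8080)
```

An outside client never chooses an instance; a fellow role, by contrast, must know exactly which instance it wants to reach.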

A user may deploy anywhere from one to tens of thousands of PaaS app instances. To communicate with each other internally, these instances must call the Windows Azure runtime API to get the list of currently deployed instances and their input and internal endpoints.
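In the .NET SDK this information comes from the `RoleEnvironment` class. The Python sketch below only models the idea: `TOPOLOGY` is a hypothetical snapshot of what the runtime API might report, and `peer_addresses` is an illustrative helper that finds the internal endpoint of every other instance of a role. Because instances come and go, a real application would refresh this list whenever the topology changes.

```python
from __future__ import annotations

# Hypothetical snapshot of what a role instance might learn from the runtime API:
# role name -> list of instances, each with its named endpoints as (ip, port).
TOPOLOGY = {
    "WorkerRole": [
        {"id": "WorkerRole_IN_0", "endpoints": {"Gossip": ("10.0.0.4", 9000)}},
        {"id": "WorkerRole_IN_1", "endpoints": {"Gossip": ("10.0.0.5", 9000)}},
        {"id": "WorkerRole_IN_2", "endpoints": {"Gossip": ("10.0.0.6", 9000)}},
    ],
}


def peer_addresses(topology: dict, role: str, endpoint: str, self_id: str) -> list[tuple[str, int]]:
    """Return the named internal endpoint of every other instance of `role`."""
    return [
        inst["endpoints"][endpoint]
        for inst in topology.get(role, [])
        if inst["id"] != self_id and endpoint in inst["endpoints"]
    ]


print(peer_addresses(TOPOLOGY, "WorkerRole", "Gossip", "WorkerRole_IN_1"))
# [('10.0.0.4', 9000), ('10.0.0.6', 9000)]
```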

The intended scenario is for a PaaS app instance to accept outside requests on an input endpoint, while the deployed instances communicate among themselves on internal endpoints. This prescribes a somewhat constrained internal communication architecture, and it does not address scenarios that need mixed communication between Azure apps and on-premises resources. For the latter, enter the Azure Service Bus.

Windows Azure Service Bus is "a hosted, secure and widely available infrastructure for widespread communication, large-scale event distribution, naming and service publishing." The intended use case for the service bus is to enable Enterprise Application Integration (EAI) messaging in the cloud.

However, its ability to project endpoints and forward ports can be used to connect PaaS and on-premises resources. Early on, this was the way to enable mixed connectivity scenarios. But its unique constraints, metered per-message pricing and an order-of-magnitude increase in overall architectural complexity make the Azure Service Bus overkill for simple networking purposes. Today there are more flexible and cost-effective networking options.

To enable simpler connectivity between Azure and on-premises resources, Microsoft offers two networking solutions. The first is the Azure Connect agent.

Windows Azure Connect is a point-to-point — i.e., machine-to-machine — connection. In simple terms, Azure Connect makes it appear that an Azure VM and a local Windows machine can "directly" communicate with each other without restriction.

NOTE for the inquisitive reader: The Azure Connect agent creates an IPsec-protected IPv6 tunnel over an IPv4 TCP connection. The only local firewall requirements are to allow outbound TCP traffic on port 443 (normal HTTPS traffic, permitted in almost every organization) and IPv6 ping (ICMPv6) traffic.
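Those two requirements can be expressed as a quick checklist. The sketch below uses a hypothetical `FirewallRule` model rather than any real Windows Firewall API; it simply reports which of the requirements stated above a given rule set fails to allow.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FirewallRule:
    direction: str                  # "inbound" or "outbound"
    protocol: str                   # e.g. "tcp" or "icmpv6"
    port: Optional[int] = None      # None means any port (or not applicable)


def missing_connect_requirements(rules: list[FirewallRule]) -> list[str]:
    """List the Azure Connect firewall requirements that `rules` does not allow."""
    missing = []
    https_out = any(
        r.direction == "outbound" and r.protocol == "tcp" and r.port in (443, None)
        for r in rules
    )
    if not https_out:
        missing.append("outbound TCP 443")
    if not any(r.protocol == "icmpv6" for r in rules):
        missing.append("IPv6 ping (ICMPv6)")
    return missing


rules = [FirewallRule("outbound", "tcp", 443)]
print(missing_connect_requirements(rules))  # ['IPv6 ping (ICMPv6)']
```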

Azure Connect is pre-installed and configured on PaaS role VMs and only needs to be activated. What's more, both local machines and PaaS roles can be placed into an Azure Connect group that can optionally interconnect these resources. That means mobile insurance appraisers in the field or traveling salespeople can be networked together with Azure PaaS apps and IaaS VMs into a virtual branch office in Azure. In addition, consider these scenarios for using Azure Connect, per Microsoft:
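The group idea can be modeled as a simple membership check. This is a deliberately simplified sketch, not Azure Connect's actual policy engine: `ConnectGroup` and `can_communicate` are hypothetical names, and the `interconnected` flag stands for the optional setting that lets local machines in a group reach each other.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ConnectGroup:
    name: str
    local_endpoints: set[str] = field(default_factory=set)  # on-premises or mobile machines
    azure_roles: set[str] = field(default_factory=set)      # Azure roles the group connects to
    interconnected: bool = False  # may local endpoints in the group reach each other?


def can_communicate(groups: list[ConnectGroup], a: str, b: str) -> bool:
    """Simplified reachability check based only on group membership."""
    for group in groups:
        members = group.local_endpoints | group.azure_roles
        if a not in members or b not in members:
            continue
        both_local = a in group.local_endpoints and b in group.local_endpoints
        if both_local and not group.interconnected:
            continue  # machine-to-machine traffic needs the interconnect option
        return True
    return False


field_office = ConnectGroup(
    "FieldOffice",
    local_endpoints={"appraiser-laptop-1", "appraiser-laptop-2"},
    azure_roles={"WebRole"},
    interconnected=True,
)
print(can_communicate([field_office], "appraiser-laptop-1", "WebRole"))             # True
print(can_communicate([field_office], "appraiser-laptop-1", "appraiser-laptop-2"))  # True
```

With `interconnected=False`, the laptops could still reach the web role but not each other.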

A common use case for Connect is having your Windows Azure web role communicate back to an on-premises SQL Server database. Or you may want to join your Windows Azure role instances to an on-premises Active Directory domain. Or you can use Connect to remotely administer your Windows Azure role instances, such as using the remote event viewer or remote service manager. Or you can remotely access your Windows Azure role instance and copy files from a Server Message Block (SMB) share on a local machine. Since Connect provides low-level network connectivity, you can use it in basically any way you choose.

However, there are drawbacks. You have to install an agent on premises and on IaaS VMs. The agent software runs only on Windows Vista SP1, Windows Server 2008/R2 or Windows 7, and it needs a workaround to install on Windows Server 2012 and Windows 8. Software IPv6 tunneling also carries more network performance overhead than hardware-based VPN tunneling.

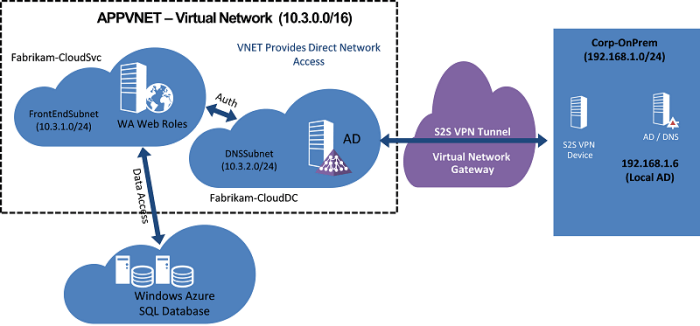

The second option, Windows Azure Virtual Network, is a service that lets users define and manage their own private virtual LAN. Once configured, the PVLAN can host PaaS roles and IaaS Azure VMs, and the networked resources communicate with each other as they would on a physical LAN. But it gets better: you can also join your on-premises LANs to the Azure PVLAN with a site-to-site VPN connection.
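Planning the address space is the first practical step. Subnets must fall inside the virtual network's address space, and on-premises ranges joined over a site-to-site VPN must not overlap it, or traffic cannot be routed unambiguously. The sketch below checks both rules with Python's standard `ipaddress` module; `validate_vnet` and the subnet names are hypothetical, and it assumes IPv4 ranges only.

```python
from __future__ import annotations

import ipaddress


def validate_vnet(address_space: str, subnets: dict[str, str], on_premises: list[str]) -> list[str]:
    """Return address-planning problems for a hypothetical virtual network layout."""
    problems = []
    space = ipaddress.ip_network(address_space)
    for name, prefix in subnets.items():
        # Every subnet must be carved out of the virtual network's address space.
        if not ipaddress.ip_network(prefix).subnet_of(space):
            problems.append(f"subnet {name} ({prefix}) is outside {address_space}")
    for prefix in on_premises:
        # On-premises ranges reached over the VPN must not overlap the Azure range.
        if ipaddress.ip_network(prefix).overlaps(space):
            problems.append(f"on-premises range {prefix} overlaps {address_space}")
    return problems


print(validate_vnet("10.1.0.0/16", {"FrontEnd": "10.1.1.0/24", "BackEnd": "10.1.2.0/24"}, ["192.168.0.0/16"]))
# []
print(validate_vnet("10.1.0.0/16", {"FrontEnd": "10.2.1.0/24"}, ["10.1.128.0/17"]))
# ['subnet FrontEnd (10.2.1.0/24) is outside 10.1.0.0/16', 'on-premises range 10.1.128.0/17 overlaps 10.1.0.0/16']
```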

In the hybrid cloud scenario, connected resources communicate securely and without restriction, yet remain completely isolated from other Azure tenants and their traffic. This configuration makes the deployed PaaS and IaaS Azure resources appear as just another branch of the organization. Users can transparently extend Active Directory services, group policies or operations management, as they would on premises.

A custom virtual network has one serious drawback. Azure provides name resolution for resources deployed in the same cloud service, but this doesn’t cover mixed scenarios in which one PVLAN hosts both PaaS roles and IaaS VMs (which must be deployed as separate services), the PVLAN extends on premises, or both.

The current solution is user-provided name resolution, such as Active Directory-integrated DNS or a public DNS service, depending on the scenario. Consider which scenarios will apply in the user-defined virtual network and understand their implications for name resolution.
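The rule described above can be captured in a small decision helper. This is an illustrative sketch: `Resource` and `needs_custom_dns` are hypothetical names, and the helper encodes only the rule stated in the text, that Azure's built-in resolution covers a single cloud service and nothing beyond it.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    name: str
    service: str              # hypothetical cloud service (deployment) name
    on_premises: bool = False


def needs_custom_dns(resources: list[Resource]) -> bool:
    """True when Azure's built-in, same-service name resolution is not enough."""
    cloud_services = {r.service for r in resources if not r.on_premises}
    spans_services = len(cloud_services) > 1
    reaches_on_premises = any(r.on_premises for r in resources)
    return spans_services or reaches_on_premises


web_only = [Resource("web0", "shop-web"), Resource("web1", "shop-web")]
mixed = [Resource("web0", "shop-web"), Resource("sqlvm", "shop-vms")]
hybrid = web_only + [Resource("dc01", "corp", on_premises=True)]
print(needs_custom_dns(web_only), needs_custom_dns(mixed), needs_custom_dns(hybrid))
# False True True
```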

A number of scenarios take advantage of lighter-weight Azure Web Sites rather than traditional PaaS roles, and many users also use SQL Azure. Why can't those resources be added to a virtual network?

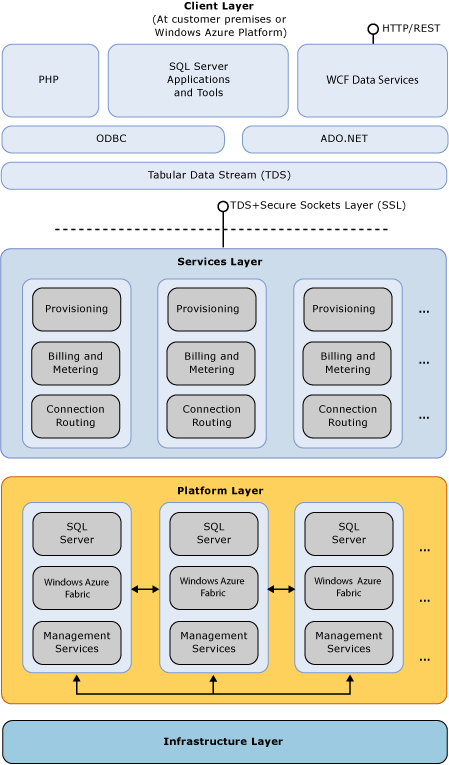

They’re actually very simple, and rooted in the nature of these services. It would be more accurate to view SQL Azure as Software as a Service (SaaS) rather than a SQL Server in the cloud. Consider this SQL Azure architecture diagram:

There’s a SQL Azure service endpoint, but there's no "instance endpoint." Layers upon layers of services and network infrastructure sit between a SQL client and whichever SQL Server instance happens to fulfill a given query.

And there’s no guarantee that the same SQL Server instance will serve the next query. Hence, it’s not feasible to extend a PVLAN across service-contract boundaries and layers of infrastructure.

The case of Azure Web Sites is somewhat different. Azure Web Sites differ from a PaaS web role in several ways. Sites belonging to different tenants can share a VM's space and resources. Even for a reserved site, the unit of deployment and management is an IIS website, not an entire VM.

It would be quite a challenge to extend a PVLAN not just to an IP address, but to an IIS site deployed "somewhere" on a (possibly shared) VM, and then completely isolate tenants and their traffic. Understandably, Azure Virtual Network doesn’t allow Azure Web Sites to be incorporated into a user-defined PVLAN.

Understanding all of the Azure networking options may seem challenging, but planning a network for simplicity and performance is worth the time.